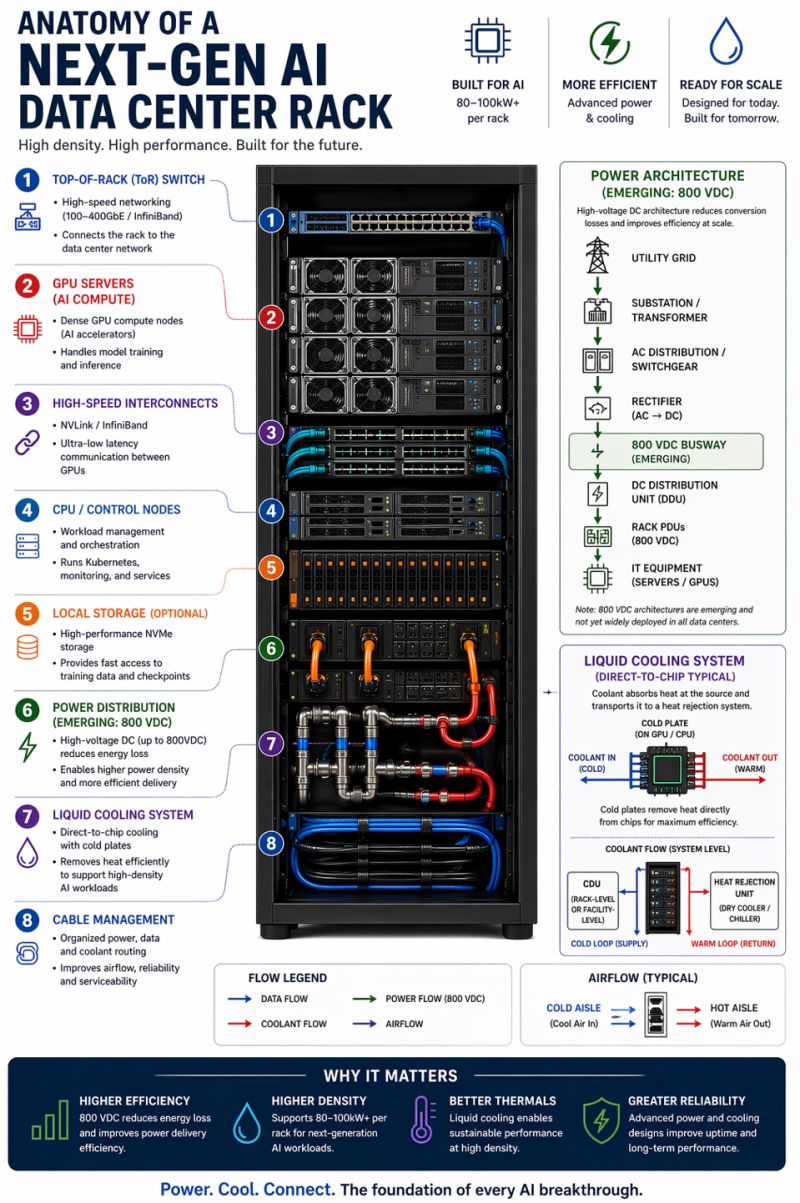

I came across this infographic and spent more time than I expected just reading through it. It’s a good snapshot of how much the anatomy of a server rack has shifted in the last few years.

A rack used to be mostly about compute and storage. Servers full of CPUs, drives somewhere in the middle, some networking at the top, and air blowing through the whole thing. The job of the infrastructure was to stay out of the way of the workload. Now the workload is the infrastructure. GPUs are the centre of gravity, and everything else (power distribution, cooling, interconnects, cable management) is designed around keeping them fed and cold enough to run flat out.

The two things that stand out most to me are power and cooling. On the power side, 800 VDC distribution is emerging as the new standard. Higher voltage means less current for the same power, which cuts resistive losses and the amount of copper needed, and distributing DC can remove conversion stages along the way. That is what makes 80 to 100 kilowatts per rack practical, a figure that would have been unthinkable not long ago. On the cooling side, air just doesn’t cut it anymore at these densities. Direct-to-chip liquid cooling, with cold plates sitting right on the GPU or CPU and coolant flowing in and out, is becoming the baseline for anything serious.
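To put rough numbers on both points, here is a back-of-the-envelope sketch in Python. Only the 800 V and 100 kW figures come from the post above. Everything else is my own assumption for illustration: the 48 V comparison bus, the 0.1 milliohm conductor resistance, plain water as the coolant, and a 10 K temperature rise. It models conductor loss only (not converter efficiency), so treat it as a feel for the scale rather than a design calculation.

```python
# Back-of-the-envelope numbers for one 100 kW rack (upper end of the range above).
RACK_POWER_W = 100_000

# Assumption: a hypothetical 0.1 milliohm distribution path, same for both voltages.
ASSUMED_BUS_RESISTANCE_OHM = 0.0001

# Assumption: coolant (or air) warms by 10 K on its way through the rack.
ASSUMED_DELTA_T_K = 10.0

# Approximate room-temperature properties: (specific heat J/(kg*K), density kg/m^3).
WATER = (4186.0, 997.0)
AIR = (1005.0, 1.2)


def bus_current_amps(power_w, voltage_v):
    """Current needed to deliver power_w at voltage_v (I = P / V)."""
    return power_w / voltage_v


def conductor_loss_w(power_w, voltage_v, resistance_ohm):
    """Resistive loss in the conductor (P_loss = I^2 * R)."""
    return bus_current_amps(power_w, voltage_v) ** 2 * resistance_ohm


def volume_flow_m3_per_s(heat_w, fluid, delta_t_k):
    """Flow needed to carry heat_w away, from Q = m_dot * c_p * dT."""
    specific_heat, density = fluid
    mass_flow_kg_per_s = heat_w / (specific_heat * delta_t_k)
    return mass_flow_kg_per_s / density


# Power: compare an assumed 48 V bus with the 800 V bus from the post.
for volts in (48, 800):
    amps = bus_current_amps(RACK_POWER_W, volts)
    loss = conductor_loss_w(RACK_POWER_W, volts, ASSUMED_BUS_RESISTANCE_OHM)
    print(f"{volts:>4} V: {amps:8.1f} A, conductor loss {loss:7.2f} W")

# Cooling: how much fluid has to move to carry the same heat away.
for name, fluid in (("water", WATER), ("air", AIR)):
    flow = volume_flow_m3_per_s(RACK_POWER_W, fluid, ASSUMED_DELTA_T_K)
    print(f"{name:>5}: {flow * 1000:9.1f} litres per second")
```

At 800 V the same 100 kW needs 125 A instead of roughly 2,083 A at 48 V, and the conductor loss scales with the square of that current. On the cooling side, under these assumptions air has to move a few thousand times the volume that water does to carry away the same heat, which is the short version of why cold plates win at these densities.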

What I find genuinely interesting is how much the physical engineering has had to evolve just to keep up with the model side. Every time someone announces a new GPU or a bigger training run, there’s a quieter story underneath it about how you actually keep that hardware alive at scale.